OIT has been following the evolving world of captioning over the years, and in particular monitoring the field for high-quality, affordable services we think would be useful to members of the Duke community. When Rev.com came along, offering guaranteed 99% accurate human-generated captions for a flat $1.00 a minute (whereas some comparable services charged well over $3.00 a minute), we took note and have facilitated a collaboration with them that has been very productive for Duke. A recent review of our usage shows that many of you are using Rev, with a large uptick over the last couple of years, and we’ve heard few if any complaints about the service.

While machine (automatic) transcription has generally been met with a dismissive attitude, the newest generation of the technology, based on IBM Watson, has become so good that we can no longer (literally) afford to ignore it. With good-quality audio to work from, this speech-to-text engine claims to deliver accuracy of 95% or more. IBM Watson isn’t a consumer-facing service, but we’ve been on the lookout for vendors building on this platform, and have found one we feel is worth exploring, called Sonix. If cost is a significant factor for you, you might consider giving it a try.

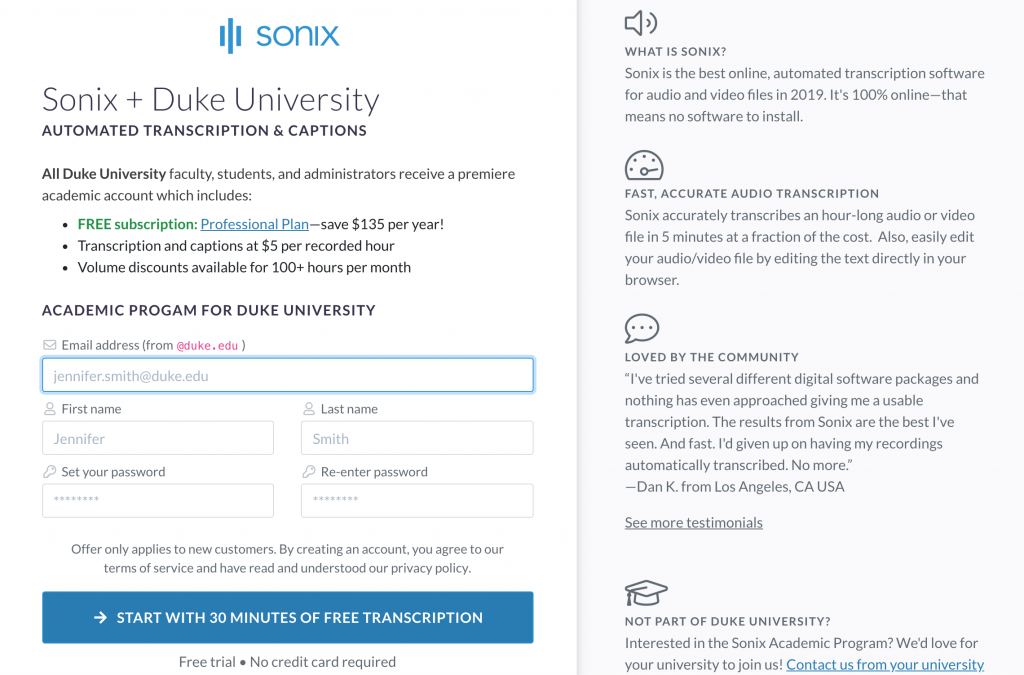

Sonix captioning costs a little over 8 cents per minute, and the company has waived the monthly subscription requirement and is offering 30 free minutes of captioning to anyone with a duke.edu email address who sets up an account through this page: https://sonix.ai/academic-program/duke-university.

We are not recommending Sonix at this time, but we are interested in hearing about your experiences with it. We would also caution that, with any machine transcription technology, you need to review the output in the company’s online editor if you want to use it as closed captions (as opposed to just a transcript). In our initial testing, Sonix’s online editor looks fairly quick and easy to use.

If you set up an account and try Sonix, please reach out to oit-mt-info@duke.edu to let us know about your experience and which specific use cases it supports.